When Your Pipeline Decides: Automating Progressive Delivery Decisions

Picture this: it's 2 AM on a Saturday. Your team just triggered a release before heading home. The new version starts receiving 5% of production traffic. Five minutes later, error rates begin climbing. Nobody is watching the dashboard. By the time someone checks their phone in the morning, users have been hitting errors for hours.

This scenario is more common than most teams admit. Releases don't always happen during business hours. Teams have lives outside work. And waiting for a human to notice a problem before taking action creates unnecessary downtime.

The solution isn't to keep people on call forever. It's to give your pipeline the ability to make decisions on its own.

How Automated Gating Works

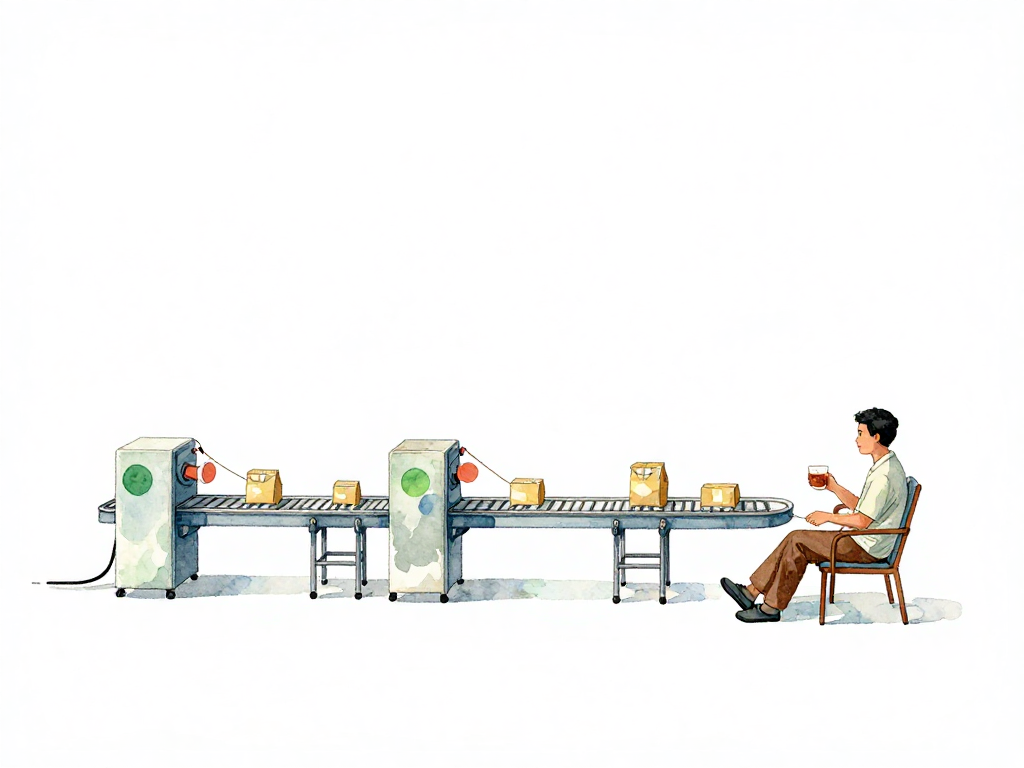

The core idea is straightforward. A progressive delivery pipeline has several stages, each exposing the new version to more traffic. Between each stage sits a gate. That gate checks real-time metrics against predefined thresholds before deciding what to do next.

Here's a concrete example. Your pipeline starts by routing 5% of traffic to the new version. It waits five minutes for data to accumulate. Then it checks three things:

The following flowchart illustrates the decision logic at each gate in the pipeline:

Here's what that gate configuration might look like in a YAML-based pipeline:

gates:

- name: canary-5pct

traffic: 5%

wait: 5m

checks:

- metric: error_rate

query: "sum(rate(http_requests_total{version='new', status=~'5..'}[5m])) / sum(rate(http_requests_total{version='new'}[5m]))"

warning: 0.005

critical: 0.02

action_warning: hold

action_critical: rollback

- metric: latency_p99

query: "histogram_quantile(0.99, sum(rate(http_request_duration_seconds_bucket{version='new'}[5m])) by (le))"

baseline: "histogram_quantile(0.99, sum(rate(http_request_duration_seconds_bucket{version='old'}[5m])) by (le))"

warning: 1.10

critical: 1.25

action_warning: hold

action_critical: rollback

This configuration checks two metrics after five minutes at 5% traffic. If the error rate exceeds 0.5% (warning), the pipeline holds. If it exceeds 2% (critical), it rolls back. Latency is compared to the old version's baseline, with similar thresholds.

- Is the error rate for the new version below 0.1%?

- Is latency within 10% of the old version's baseline?

- Are there no significant increases in 5xx responses?

If all metrics pass, the pipeline proceeds to the next stage, say expanding traffic to 20%. If metrics fail, the pipeline needs to decide what to do.
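To make the gate logic concrete, here is a minimal Python sketch of how one gate might turn observed metrics into a decision. The names `Action`, `Check`, `evaluate_check`, and `evaluate_gate` are hypothetical and not taken from any particular pipeline tool. The sketch assumes that reaching a threshold counts as breaching it and that the gate takes the most severe action any single check asks for.

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import Dict, Iterable, Optional


class Action(IntEnum):
    """Ordered by severity so the worst outcome can be picked with max()."""
    CONTINUE = 0
    HOLD = 1
    ROLLBACK = 2


@dataclass(frozen=True)
class Check:
    metric: str
    warning: float   # reaching this holds the rollout
    critical: float  # reaching this rolls back
    relative_to_baseline: bool = False  # judge new/old ratio instead of raw value


def evaluate_check(check: Check, new_value: float,
                   baseline: Optional[float] = None) -> Action:
    """Map one observed metric value to an action."""
    value = new_value
    if check.relative_to_baseline:
        if not baseline:
            # Missing or zero baseline: do not guess, hold for a human.
            return Action.HOLD
        value = new_value / baseline
    if value >= check.critical:
        return Action.ROLLBACK
    if value >= check.warning:
        return Action.HOLD
    return Action.CONTINUE


def evaluate_gate(checks: Iterable[Check], observed: Dict[str, float],
                  baselines: Optional[Dict[str, float]] = None) -> Action:
    """The gate's decision is the most severe action any check asks for."""
    baselines = baselines or {}
    return max(
        evaluate_check(c, observed[c.metric], baselines.get(c.metric))
        for c in checks
    )


# Thresholds mirror the example numbers used in this post.
CHECKS = [
    Check("error_rate", warning=0.005, critical=0.02),
    Check("latency_p99", warning=1.10, critical=1.25, relative_to_baseline=True),
]

decision = evaluate_gate(
    CHECKS,
    observed={"error_rate": 0.008, "latency_p99": 0.210},
    baselines={"latency_p99": 0.200},
)
print(decision.name)
```

Here the error rate of 0.8% sits between the warning and critical thresholds while latency is only 5% above baseline, so the gate holds.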

Three Possible Actions: Continue, Hold, or Rollback

After each gate check, the pipeline has three options.

Continue means everything looks good. The new version moves to the next traffic tier. This is the happy path and requires no human intervention.

Hold means the pipeline stops progressing the release, but the new version keeps running at its current traffic percentage. The team gets notified and can investigate without pressure. This is useful when the problem isn't critical yet. Maybe error rates crept up slightly but aren't alarming. The team can check logs, look at traces, and decide whether to proceed or roll back.

Rollback means the pipeline immediately shifts all traffic back to the old version. This happens when metrics indicate a serious problem: error rates spike dramatically, all requests start to fail, or latency degrades beyond acceptable limits. In these cases, waiting for human approval only makes things worse.
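The three actions can be sketched as a small loop over traffic stages. This is an illustration under assumptions, not a real pipeline API: `run_rollout`, `decide`, `set_traffic`, and `notify` are hypothetical names, and the 50% and 100% stages are invented for the example (the post itself only mentions 5% and 20%).

```python
from enum import Enum
from typing import Callable, List, Tuple


class Action(Enum):
    CONTINUE = "continue"
    HOLD = "hold"
    ROLLBACK = "rollback"


def run_rollout(
    stages: List[int],
    decide: Callable[[int], Action],
    set_traffic: Callable[[int], None],
    notify: Callable[[str], None],
) -> Tuple[str, int]:
    """Walk through traffic stages; return (final_state, traffic_percent)."""
    for percent in stages:
        set_traffic(percent)
        # In a real pipeline this step would wait, then query metrics.
        action = decide(percent)
        if action is Action.ROLLBACK:
            set_traffic(0)  # all traffic goes back to the old version
            notify(f"rolled back at {percent}%")
            return ("rolled_back", 0)
        if action is Action.HOLD:
            # The new version keeps its current share while people investigate.
            notify(f"holding at {percent}%")
            return ("held", percent)
    return ("completed", stages[-1])


# Example: the gate at 20% asks for a hold.
messages: List[str] = []
result = run_rollout(
    stages=[5, 20, 50, 100],
    decide=lambda p: Action.HOLD if p == 20 else Action.CONTINUE,
    set_traffic=lambda p: None,
    notify=messages.append,
)
print(result, messages)
```

Note the asymmetry: a hold leaves traffic where it is and returns control to the team, while a rollback resets traffic before anyone is asked.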

Setting Thresholds: Warning vs Critical

The decision between holding and rolling back depends on severity. Many teams implement two levels of thresholds.

A warning threshold triggers a hold. For example, if error rates reach 0.5%, the pipeline stops progressing and notifies the team. The new version stays at its current traffic level while someone investigates.

A critical threshold triggers an automatic rollback. If error rates hit 2%, the pipeline pulls the new version immediately. No waiting. No questions.

This two-tier approach gives teams breathing room for minor issues while protecting users from major failures. The exact numbers depend on your application's tolerance for errors. A payment system might have much stricter thresholds than a content website.

What the Pipeline Needs to Make Decisions

Automated decision-making requires three things working together.

First, your observability system must expose metrics in real time. The pipeline needs to query error rates, latency, and other signals immediately after shifting traffic. If your monitoring has a five-minute delay, the pipeline can't make timely decisions.
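One way to guard against delayed metrics is to check how old a sample is before trusting it. The following sketch assumes each metric reading carries a timestamp. The function name `usable_value` and the 60-second default are hypothetical choices for illustration.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional


def usable_value(
    value: float,
    sample_time: datetime,
    now: datetime,
    max_age: timedelta = timedelta(seconds=60),
) -> Optional[float]:
    """Return the metric value only if its sample is recent enough.

    None signals "no trustworthy data"; a caller would typically hold
    rather than continue on a stale reading.
    """
    age = now - sample_time
    if age < timedelta(0) or age > max_age:
        return None  # stale sample, or clock skew put it in the future
    return value


now = datetime(2024, 1, 6, 2, 5, tzinfo=timezone.utc)
fresh = usable_value(0.004, now - timedelta(seconds=20), now)
stale = usable_value(0.004, now - timedelta(minutes=5), now)
print(fresh, stale)
```

Treating stale data as a reason to hold, rather than as a pass, keeps a monitoring outage from silently promoting a bad release.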

Second, the pipeline needs to compare new-version metrics against the old version's baseline. This means either storing baseline data from the previous stable release or querying the old version while it still serves traffic. The comparison should account for normal fluctuations. A 5% latency increase during peak hours might be normal, while the same increase during low traffic could indicate a problem.
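One possible way to account for normal fluctuation is to judge the new version against the spread of recent baseline samples rather than a single number. The sketch below is an assumption, not a method the post prescribes: it uses a simple mean-plus-three-standard-deviations rule, and `exceeds_normal_variation` and the sample values are hypothetical.

```python
import statistics
from typing import Sequence


def exceeds_normal_variation(
    new_value: float,
    baseline_samples: Sequence[float],
    sigmas: float = 3.0,
) -> bool:
    """True if new_value is above what the baseline's own noise explains."""
    mean = statistics.fmean(baseline_samples)
    spread = statistics.pstdev(baseline_samples)
    return new_value > mean + sigmas * spread


# p99 latency in milliseconds for the old version over recent windows.
peak_hours = [200, 210, 190, 205, 195]   # noisy baseline
quiet_hours = [200, 201, 199, 200, 200]  # very stable baseline

# The same 5% increase (200 ms -> 210 ms) is judged differently.
print(exceeds_normal_variation(210, peak_hours))   # within normal noise
print(exceeds_normal_variation(210, quiet_hours))  # stands out
```

With a noisy baseline the 210 ms reading falls inside the usual range, while against a stable baseline the same reading is flagged.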

Third, you need clear rules encoded in the pipeline configuration. These rules define which metrics to check, what thresholds to use, and what action to take for each threshold level. Keep these rules simple and explicit. Complex logic with multiple conditions becomes hard to maintain and debug.
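Keeping rules explicit also makes them easy to validate before a release starts. Here is a minimal sketch that loads rules shaped like the gate checks in this post and rejects obviously broken ones. The `Rule` class, `load_rules`, and the set of allowed actions are hypothetical, and the query strings are shortened placeholders.

```python
from dataclasses import dataclass
from typing import Any, Dict, List, Optional

ALLOWED_ACTIONS = {"continue", "hold", "rollback"}


@dataclass(frozen=True)
class Rule:
    metric: str
    query: str
    warning: float
    critical: float
    baseline: Optional[str] = None
    action_warning: str = "hold"
    action_critical: str = "rollback"

    def __post_init__(self) -> None:
        # A warning threshold at or above critical would never trigger.
        if not self.warning < self.critical:
            raise ValueError(f"{self.metric}: warning must be below critical")
        for action in (self.action_warning, self.action_critical):
            if action not in ALLOWED_ACTIONS:
                raise ValueError(f"{self.metric}: unknown action {action!r}")


def load_rules(raw: List[Dict[str, Any]]) -> List[Rule]:
    """Build validated rules; an unexpected key raises TypeError."""
    return [Rule(**item) for item in raw]


rules = load_rules([
    {"metric": "error_rate", "query": "<error rate query>",
     "warning": 0.005, "critical": 0.02},
    {"metric": "latency_p99", "query": "<new p99 query>",
     "baseline": "<old p99 query>", "warning": 1.10, "critical": 1.25},
])
print([r.metric for r in rules])
```

Failing fast on a misconfigured rule is cheaper than discovering mid-rollout that a threshold could never fire.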

Automation Doesn't Replace Humans

It's tempting to think automated decisions mean you can ignore releases entirely. That's not the case. Automation handles the predictable scenarios. Humans still need to:

- Define appropriate thresholds for each metric

- Review whether thresholds remain relevant as the application evolves

- Handle edge cases the automation doesn't cover

- Investigate holds and decide whether to proceed or roll back

- Update rules when new failure patterns emerge

Think of automation as a safety net for the common cases. It catches the obvious problems quickly, giving humans more time to focus on complex situations that require context and judgment.

A Quick Practical Checklist

Before implementing automated decision gates, verify these basics:

- Can your pipeline query metrics from your monitoring system in real time?

- Do you have baseline metrics from your current stable version?

- Have you defined warning and critical thresholds for error rate, latency, and 5xx responses?

- Does your pipeline have a mechanism to hold progression without rolling back?

- Are notifications configured to reach the right people when a hold or rollback occurs?

- Have you tested the automated rollback in a staging environment?

What Comes Next

Once your pipeline can decide when to continue, hold, or roll back, the next step is choosing how to distribute traffic. Two common approaches are canary releases and staged rollouts. Each has different characteristics for expanding a new version's reach. The right choice depends on your application's architecture and how your infrastructure handles traffic routing.

But that's a topic for another post. For now, focus on giving your pipeline the ability to make decisions when nobody is watching. Your users will thank you, and your team will sleep better.