When Database Migrations Need Their Own Pipeline

You have a solid CI/CD pipeline for your application. Code gets built, tests run, and deployments happen automatically. Then someone opens a pull request that adds a new column to the users table. Suddenly, the clean pipeline feels wrong. Running a database migration in the same flow as application code means either blocking the deployment until the migration finishes, or running the migration separately and hoping nothing breaks.

The problem is that database changes behave differently than application code. A failed build just means no new deployment. A failed migration can corrupt data, lock tables, or leave your database in an inconsistent state. You need a pipeline designed for the specific risks of schema changes, data backfills, and verification.

Why Application Pipelines Fall Short

Application pipelines follow a straightforward pattern: build, test, deploy. If a test fails, the pipeline stops. If deployment fails, you fix the issue and redeploy. The rollback is usually just deploying the previous version.

Database migrations break this model. A migration changes the structure or content of your data. Rolling back a migration is not the same as reverting a code change. You need to run a separate script to undo the schema change, and that script might fail if data has already been transformed. You also need to handle backfilling old records with new values, and verify that the data is consistent after the migration.

Trying to force database changes into the same pipeline as application code leads to compromises. Either you skip automated testing for migrations, or you run them manually during maintenance windows, or you accept the risk of running untested scripts in production.

A Separate Pipeline for Database Changes

The solution is to create a dedicated pipeline for database changes, completely separate from your application pipeline. This pipeline has its own stages, its own approval gates, and its own monitoring. It treats database changes as first-class deployments, not as side effects of application releases.

Here is how the stages work in sequence.

The following YAML snippet shows how you might define these stages in a GitHub Actions workflow:

name: Database Migration Pipeline

on:

pull_request:

paths:

- 'migrations/**'

jobs:

dry-run:

runs-on: ubuntu-latest

steps:

- run: ./scripts/dry-run.sh

migration:

needs: dry-run

runs-on: ubuntu-latest

steps:

- run: ./scripts/migrate.sh

backfill:

needs: migration

runs-on: ubuntu-latest

steps:

- run: ./scripts/backfill.sh

reconciliation:

needs: backfill

runs-on: ubuntu-latest

steps:

- run: ./scripts/reconcile.sh

rollback-test:

needs: reconciliation

runs-on: ubuntu-latest

steps:

- run: ./scripts/rollback.sh

- run: ./scripts/reconcile.sh

Each job runs only if the previous job succeeds, mirroring the pipeline flow described above.

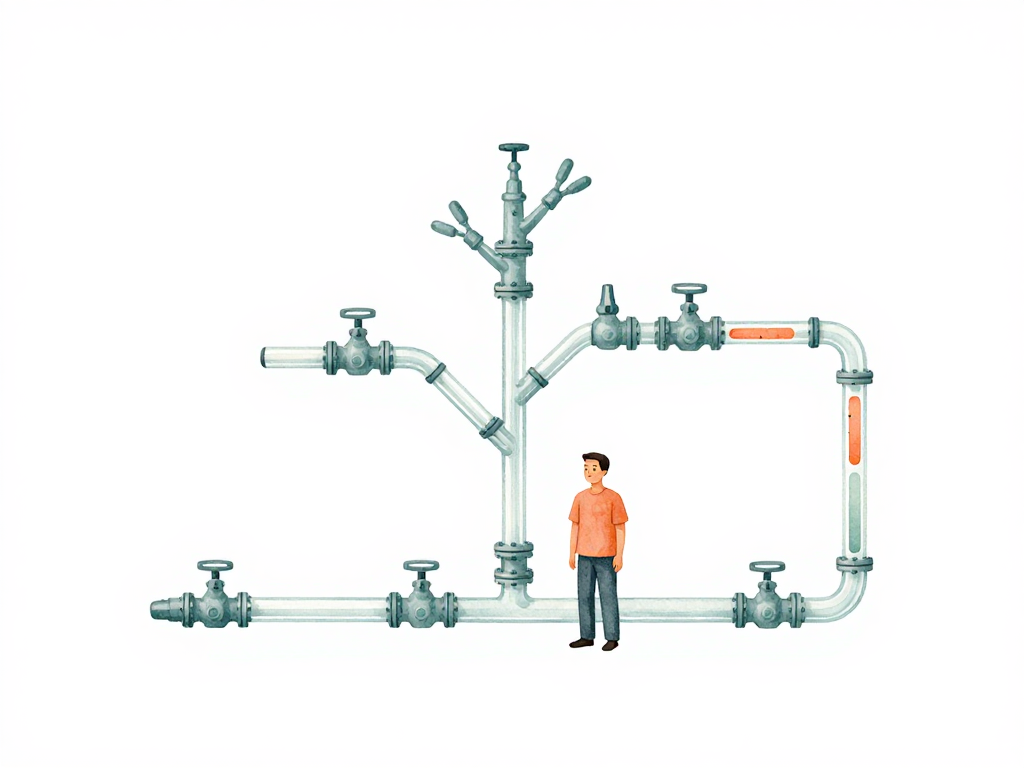

The following flowchart shows the five stages and their progression:

Stage 1: Dry-Run

Every time a new migration script enters your repository, the pipeline runs a dry-run against a staging database. The script executes but does not actually change anything. The goal is to catch syntax errors, missing dependencies, or logic problems before they touch real data.

If the dry-run fails, the pipeline stops immediately. The team gets a notification, and no further stages run. This catches most common mistakes early, when they are cheap to fix.

Stage 2: Migration

After the dry-run passes, the pipeline runs the actual migration against the staging database. This changes the schema or transforms the data, but still in a safe environment. The pipeline logs every step: start time, end time, number of rows affected, and any warnings.

These logs serve as an audit trail. When something goes wrong later, you can trace exactly what happened during the migration. You also have a record of how long each step took, which helps you estimate production run times.

Stage 3: Backfill

Some migrations require filling in data for existing records. For example, adding a new column with a default value might need to update millions of existing rows. Running this as one massive update can lock the table for minutes or hours.

The pipeline handles backfills in small batches, typically one thousand rows per iteration, with a short pause between batches. This keeps the database responsive and reduces the risk of long-running locks. The pipeline monitors each batch for duration and error rate. If a batch fails, the pipeline stops and sends an alert. It does not automatically retry, because the failure might indicate a deeper problem that needs investigation.

Stage 4: Reconciliation

After the migration and backfill complete, the pipeline runs a reconciliation script. This compares the data before and after the migration. The comparison can check row counts, checksums on specific columns, or aggregate values like total balances in a transaction table.

If the reconciliation finds unexpected differences, the pipeline fails. The team must investigate before proceeding. This stage catches silent data corruption, partial updates, or logic errors that did not cause a crash but still produced wrong results.

Stage 5: Rollback Test

The pipeline runs the rollback script to verify that the migration can be undone cleanly. After the rollback, it runs reconciliation again to confirm the data returned to its original state.

This is the most important stage for building confidence. If the rollback test passes in staging, you know you can safely undo the migration in production if something goes wrong. If it fails, the pipeline stops, and the migration is not allowed to proceed to production.

Running in Production

After all five stages pass in staging, the pipeline is ready for production. But the process is not automatic. A manual approval step sits between staging and production. Someone with database knowledge reviews the results and approves the production run.

In production, the pipeline runs the same sequence: dry-run, migration, backfill, reconciliation, and rollback test. The difference is that monitoring is tighter, and the pipeline can be stopped mid-stage if anomalies appear. Each stage in production also has its own rollback capability, so you can abort at any point without leaving the database in a broken state.

Practical Checklist for Your Database Pipeline

- Separate database pipeline from application pipeline

- Always run dry-run before actual migration

- Log every step with timestamps and row counts

- Run backfills in small batches with monitoring

- Add reconciliation checks after migration and backfill

- Test rollback scripts in staging before production

- Require manual approval for production runs

- Keep the ability to stop the pipeline mid-stage

The Takeaway

Database migrations deserve the same rigor as application deployments. A dedicated pipeline with dry-run, backfill, reconciliation, and rollback test stages gives you confidence that schema changes will not silently break your data. The extra stages add time to each migration, but they save far more time by preventing production incidents and the frantic recovery work that follows. Treat your database changes like production deployments, and your data will stay consistent through every update.